On Tuesday, a New Mexico jury ordered Meta to pay $375 million in civil penalties for misleading consumers about platform safety and enabling harm against its users, including child sexual exploitation. The jury deliberated for about one day.

That speed says something. Seven weeks of evidence, one day to decide.

The case, brought by the New Mexico attorney general in December 2023, is the first trial to find Meta liable for acts committed on its platform. It is also something else: one of the most detailed public records we now have of what happens inside a large platform when safety warnings are raised, documented, and then set aside.

For anyone running a platform, that record is worth reading carefully. Not because Meta is uniquely bad, but because the decisions that led to this verdict are not unusual ones. They are the kinds of decisions that get made every quarter, in product meetings and budget conversations, at companies of every size.

A Company That Knew

The New Mexico attorney general’s office spent almost seven weeks presenting evidence. What emerged was not a story about a few bad actors slipping through the cracks. It was a story about a company that knew, that was told repeatedly by its own employees and by external child safety experts, and that kept going anyway.

Internal documents showed that warnings about harmful conditions on Meta’s platforms were raised consistently. Those warnings did not change the direction of the company. Meta executives, including Mark Zuckerberg and Instagram leader Adam Mosseri, testified that harm to children was essentially inevitable given the scale of their platforms.

That framing deserves to sit with you for a moment.

The argument was not “we tried and failed.” It was closer to “platforms this large will always produce this kind of harm, and that is simply the cost of operating at scale.” A jury of ordinary people heard that argument and disagreed, in about a day.

There is also a more specific failure worth examining. Witnesses from law enforcement and the National Center for Missing and Exploited Children testified that Meta’s approach to AI moderation had generated high volumes of what investigators called “junk” reports. These were automated flags that were too vague, too numerous, and too poorly calibrated to be useful. Law enforcement could not act on them. According to witness testimony, crimes went uninvestigated as a result.

Deploying automation without proper calibration, human oversight, or quality controls does not make a platform safer. It creates the appearance of action while actual harm continues underneath.
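What "calibration, human oversight, and quality controls" can mean in practice is easier to see in a few lines of code. The Python below is a hypothetical sketch, not Meta's system or any vendor's product: the `Flag` fields, the 0.7 review threshold, and the `reviewer_confirms` callback are all illustrative assumptions. It shows confidence-threshold triage (assuming the model's scores are already calibrated probabilities), a human-review gate before anything is sent to investigators, and a precision metric that measures how many outgoing reports investigators could actually act on.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class Flag:
    """A hypothetical automated moderation flag (field names are illustrative)."""
    item_id: str
    score: float        # model's estimated probability of a real violation; assumed calibrated
    has_evidence: bool  # whether the flag carries enough context for someone to act on it


def triage(flags: Iterable[Flag], review_threshold: float = 0.7) -> tuple[list[Flag], list[Flag]]:
    """Split flags into a human-review queue and a held pile.

    Flags below the (hypothetical) threshold, or lacking actionable context,
    are not forwarded anywhere; they are kept for auditing and retraining.
    """
    queue, held = [], []
    for flag in flags:
        if flag.score >= review_threshold and flag.has_evidence:
            queue.append(flag)
        else:
            held.append(flag)
    return queue, held


def escalate(queue: list[Flag], reviewer_confirms: Callable[[Flag], bool]) -> list[Flag]:
    """Only flags that a human reviewer confirms become external reports."""
    return [flag for flag in queue if reviewer_confirms(flag)]


def precision(reports: list[Flag], actionable_ids: set[str]) -> float:
    """Share of external reports that investigators marked as actionable."""
    if not reports:
        return 0.0
    return sum(report.item_id in actionable_ids for report in reports) / len(reports)


if __name__ == "__main__":
    flags = [
        Flag("a", 0.95, True),
        Flag("b", 0.40, True),   # low confidence: held, not reported
        Flag("c", 0.90, False),  # confident but vague: held, not reported
        Flag("d", 0.80, True),
    ]
    queue, held = triage(flags)
    reports = escalate(queue, lambda f: f.item_id == "a")  # stand-in for a human decision
    print([f.item_id for f in queue], [f.item_id for f in held], precision(reports, {"a"}))
```

The design choice worth noting is that precision is tracked on what leaves the platform, not on how many flags the model raises. A pipeline that maximizes flag volume can look busy while its precision, the share of reports anyone can act on, stays low.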

Meta’s 2023 decision to encrypt Facebook Messenger compounded the problem. Encryption is a legitimate privacy tool. But rolling it out without building compensating safety mechanisms meant that one of the primary channels predators used to groom children became effectively invisible to investigators. A product decision was made. Law enforcement lost access to evidence.

The Legal Ground Is Shifting

For years, platforms have operated under a reliable assumption: Section 230 of the Communications Decency Act protects them from liability for content created by their users. It has been the legal foundation of the internet as we know it, and it has allowed platforms to scale without taking on full responsibility for what happens on them.

That assumption is getting harder to rely on.

Meta attempted to invoke Section 230 to have the New Mexico case dismissed. The judge denied the motion. The reasoning behind that ruling is the most important part of this case: the lawsuit focused not on content created by users, but on Meta’s own decisions about how its platform was designed, how content was curated, and how the company responded to warnings from external experts and its own employees, warnings that were later laid out in evidence at trial. That is a different legal category entirely, and one the court found Section 230 does not protect.

This is not an isolated ruling. Across the United States, courts and legislators are increasingly looking at the gap between what platforms say they do to protect users and what they do in practice. The New Mexico verdict is the first of its kind, but it is unlikely to be the last. A parallel case in Los Angeles, originally brought against Meta, YouTube, Snap and TikTok, is currently before a jury; Snap and TikTok have already settled.

For platform leaders, the practical implication is clear: “we are just a platform” is no longer a complete answer, legally or reputationally. Juries, regulators, and the public are increasingly capable of distinguishing between genuine investment in safety infrastructure and the performance of it.

The $375 million penalty was calculated at the maximum rate of $5,000 per violation under New Mexico’s consumer protection law. That number could grow, as the state seeks additional penalties in the next phase of proceedings. And here is the part that should concentrate the mind of every platform leader: this is not federal action. This is one state. The United States has fifty of them, each with its own consumer protection laws and its own attorney general capable of bringing exactly this kind of case. New Mexico just showed them how.
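The arithmetic behind the headline figure shows why per-violation penalties scale so quickly. Assuming every violation was assessed at the $5,000 maximum (the rate is stated above; the count is inferred here, not taken from the verdict form), the total implies:

$$
N_{\text{violations}} = \frac{\$375{,}000{,}000}{\$5{,}000 \text{ per violation}} = 75{,}000
$$

At the same rate, every additional 10,000 violations a state can prove adds $50 million. How the jury actually tallied violations may differ from this back-of-the-envelope figure, but the structure of the calculation is the point: exposure grows linearly with the number of harms a state can document.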

Two Approaches to a Hard Problem

None of this is an argument that keeping platforms safe is easy. It is not. The volume of content moving through any large platform is genuinely staggering, bad actors are persistent and adaptive, and the line between aggressive moderation and overreach is one that trust and safety teams navigate every day.

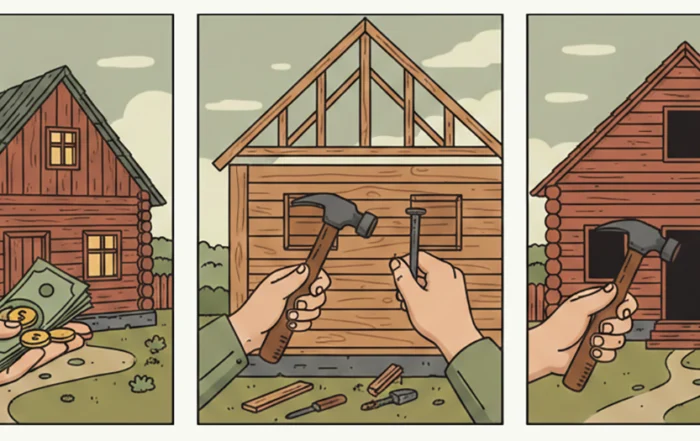

But the Meta case draws a reasonably clear line between two very different approaches to that difficulty.

One approach treats harm as an inevitable byproduct of scale, invests in automation that produces the appearance of action, and hopes the legal and reputational exposure stays manageable. The other treats safety as an infrastructure problem, one that requires the same serious engineering attention as performance, reliability, or revenue.

The gap between those two approaches shows up in specific decisions. Did your AI moderation produce actionable signals, or noise? When your own team raised concerns, did those concerns change anything? When you made a product decision that affected safety, did you build compensating mechanisms, or ship and move on?

These are not questions regulators invented. They are the questions a jury of ordinary people in New Mexico just spent seven weeks learning to ask.

At Aiba, we build trust and safety infrastructure for platforms that want to answer those questions well. That means AI moderation calibrated to produce signal rather than volume, human oversight built into the process rather than added after the fact, and audit tools that give platforms genuine visibility into what is happening in their communities.

The $375 million verdict is not just a number. It is a record of what was known, when it was known, and what was decided anyway. Every platform leader reading this has the opportunity to write a different record.